How Apple Intelligence Works

This week in AI: With a guest star (former TAUS CTO), we only talk about Apple Intelligence, how it works, and how we should be thanking Apple for protecting our data in the age of AI.

This week in AI, we are only talking about Apple Intelligence, which launched at WWDC. Watch the video on YouTube.

There were a lot of updates this week, including:

Stability AI released a sound generator

Asana is launching AI teammates to work alongside humans

Mistral AI raised a $640 million Series B round, and

Meta launched AI-powered tools for businesses in WhatsApp.

However, the biggest news was the announcement of Apple Intelligence, and there is just so much to unpack here.

Advancement #1: Apple Intelligence is Launched

Apple's highly anticipated WWDC keynote took place on Monday, unveiling updates such as the new macOS Sequoia, Tap to Cash (similar to Venmo, but between devices), amazing updates to Siri that finally make her a genuinely helpful assistant, and more. But what makes Apple Intelligence so different in this launch? It is by far the most advanced AI architecture for data privacy protection available to the everyday user, and it sets a new standard. Let's start with how it works.

Apple has traditionally had a strict on-device-first policy for data privacy protection. While they built their AI infrastructure to run models on device as much as possible using Apple Silicon, Apple Intelligence leverages both on-device and cloud models. Because foundation models are so large that they push the limits of what can run on a device, this was probably unavoidable.

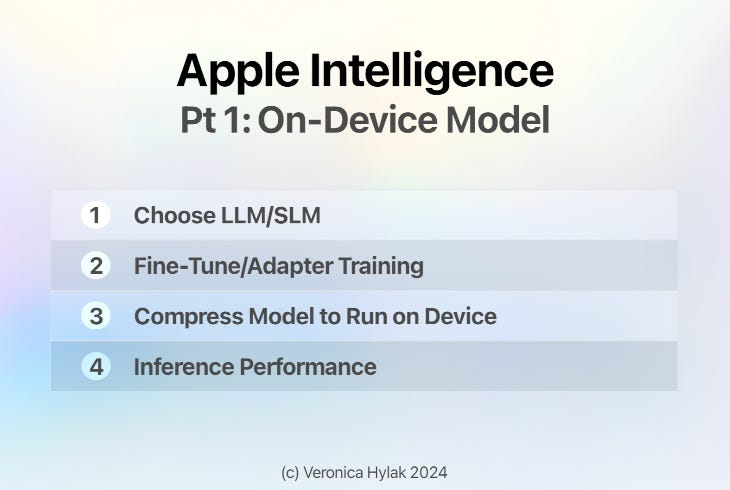

Part 1: On Device Models

Let's first take a look at their on-device infrastructure and training strategy, which is used for both their LLM and their diffusion model.

Step 1: Choose Model

Apple started building their infrastructure by choosing an LLM that could run on device, selecting it based on specialization, size, and performance.

Step 2: Multiple Training Methods

The training process was handled in two halves. First, they used traditional fine-tuning, running multiple training passes on the model to specialize it in specific tasks such as text summarization, proofreading, query handling, email replies, urgency, friendliness, and more.

After traditional fine-tuning, Apple adopted a newer approach to fine-tuning using a technique called adapters. Adapters are small sets of model weights that are overlaid on the common base foundation model. They can be dynamically loaded and swapped, allowing the base model to specialize on the fly for different tasks.
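To make the adapter idea a bit more concrete, here is a minimal sketch in plain Python. It assumes a low-rank adapter (one common way to build adapters), and the class name, task names, and tiny weight matrices are all made up for illustration. This is not Apple's implementation, just a picture of "small weights overlaid on a shared base and swapped per task."

```python
# Illustrative only: toy sizes and made-up weights, not Apple's implementation.

def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class AdaptedLayer:
    """A frozen base weight matrix plus optional, swappable low-rank adapters."""

    def __init__(self, base_weights):
        self.base_weights = base_weights  # shared by every task, never modified
        self.adapters = {}                # task name -> (down, up) matrices
        self.active = None                # name of the currently loaded adapter

    def register_adapter(self, task, down, up):
        # down: rank x inputs, up: outputs x rank (both much smaller than the base)
        self.adapters[task] = (down, up)

    def load_adapter(self, task):
        # Swapping tasks only changes which small matrices get applied.
        self.active = task

    def forward(self, x):
        y = matvec(self.base_weights, x)
        if self.active is not None:
            down, up = self.adapters[self.active]
            delta = matvec(up, matvec(down, x))  # the adapter's correction
            y = [a + b for a, b in zip(y, delta)]
        return y


layer = AdaptedLayer([[1.0, 0.0], [0.0, 1.0]])  # toy base model (identity)
layer.register_adapter("summarization", [[1.0, 1.0]], [[0.5], [0.0]])
layer.register_adapter("proofreading", [[1.0, -1.0]], [[0.0], [2.0]])

x = [2.0, 1.0]
base_out = layer.forward(x)          # no adapter loaded
layer.load_adapter("summarization")
summary_out = layer.forward(x)       # same base, summarization overlay
layer.load_adapter("proofreading")
proof_out = layer.forward(x)         # same base, proofreading overlay
```

The base weights are never touched. Each task only contributes its own small pair of matrices, which is why swapping adapters is cheap compared to loading a whole new model.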

Step 3: Compressing the Model to Fit on a Phone

Their third step was compression: shrinking the model from 16 bits per parameter to less than four bits per parameter so that it fits and runs on Apple devices while maintaining its quality.
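To get a feel for what that compression buys, here is a rough back-of-the-envelope sketch. The 3 billion parameter count is a hypothetical number picked for illustration (it is not from the announcement), 4 bits is used as an upper bound for "less than four," and the simple uniform quantizer below is a generic textbook technique, not Apple's actual method.

```python
def model_size_gb(num_params, bits_per_param):
    """Storage needed for the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def quantize_4bit(weights):
    """Map floats onto 16 evenly spaced levels (integer codes 0..15)."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 if all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, lo, scale


def dequantize_4bit(codes, lo, scale):
    """Turn the 4-bit codes back into approximate float weights."""
    return [lo + c * scale for c in codes]


NUM_PARAMS = 3_000_000_000                 # hypothetical model size
size_16 = model_size_gb(NUM_PARAMS, 16)    # 6.0 GB at 16 bits per parameter
size_4 = model_size_gb(NUM_PARAMS, 4)      # 1.5 GB at 4 bits per parameter

weights = [-0.75, -0.1, 0.0, 0.2, 0.75]    # made-up example weights
codes, lo, scale = quantize_4bit(weights)
restored = dequantize_4bit(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Under these assumptions the weights shrink to a quarter of their original size, and each restored weight is off by at most half a quantization step. Keeping that error from hurting quality is the hard part of this step.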

Step 4: Inference Performance

The final step was optimizing the models to minimize the time required to process a prompt and generate a response.

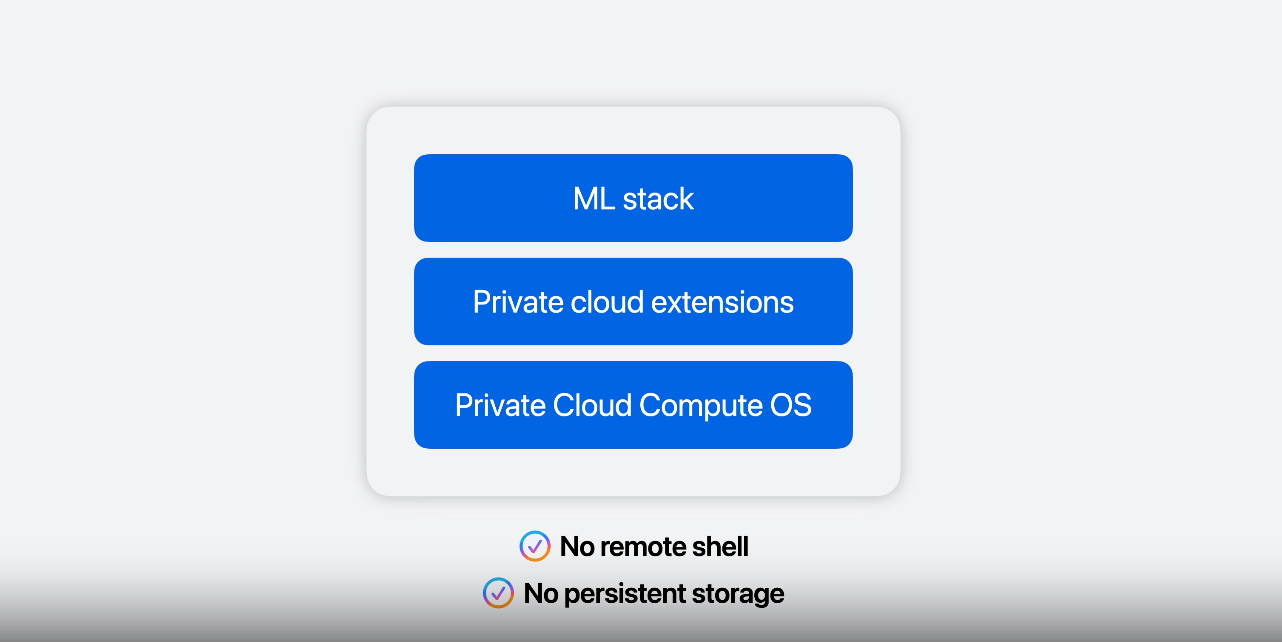

Part 2: Leveraging Larger Models with Private Cloud Compute

Now, despite their impressive efforts to do what they could on device, the full power of LLMs and foundation models in general can't be harnessed on a single device. With Apple Intelligence, they have moved away from their strict on-device policy, extending Apple Intelligence to the cloud with a new infrastructure called Private Cloud Compute.

Their new system extends Apple's privacy protections to servers designed for private AI processing. Private Cloud Compute runs on a new operating system with:

No permanent data storage or remote access (including no access through a remote shell), helping to prevent unauthorized data access;

A complete machine learning stack for the actual execution of AI tasks; and

Protection of important encryption keys, so that the identity of a Private Cloud Compute cluster or instance can be verified before requests are sent.

All of this is established through an end-to-end encrypted connection to a user's Private Cloud Compute instance, ensuring that only that instance can decrypt the request data. The data is not stored after the request is processed, and is therefore not accessible to Apple or anyone else.
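The "verify before you send" idea can be sketched in a few lines. This is a heavily simplified illustration under my own assumptions: the function names, the trusted list, and the use of a SHA-256 hash as a software "measurement" are all hypothetical, and the real protocol (including the encryption itself) is far more involved.

```python
import hashlib
import hmac


def measurement(software_image: bytes) -> str:
    """Hypothetical fingerprint of the software a cloud node is running."""
    return hashlib.sha256(software_image).hexdigest()


# Hypothetical list of software fingerprints the device is willing to trust.
TRUSTED_MEASUREMENTS = {measurement(b"pcc-os-release-1")}


def is_trusted(node_measurement: str) -> bool:
    # Constant-time comparison against every trusted fingerprint.
    return any(
        hmac.compare_digest(node_measurement, trusted)
        for trusted in TRUSTED_MEASUREMENTS
    )


def send_request(prompt: str, node_measurement: str) -> str:
    # The device refuses to send anything to a node it cannot verify.
    if not is_trusted(node_measurement):
        return "refused: node identity could not be verified"
    return f"sent {len(prompt)} characters to verified node"


ok = send_request("hi", measurement(b"pcc-os-release-1"))
blocked = send_request("hi", measurement(b"tampered-image"))
```

The point of the sketch is the ordering: the check happens on the sender's side, before any request data leaves the device.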

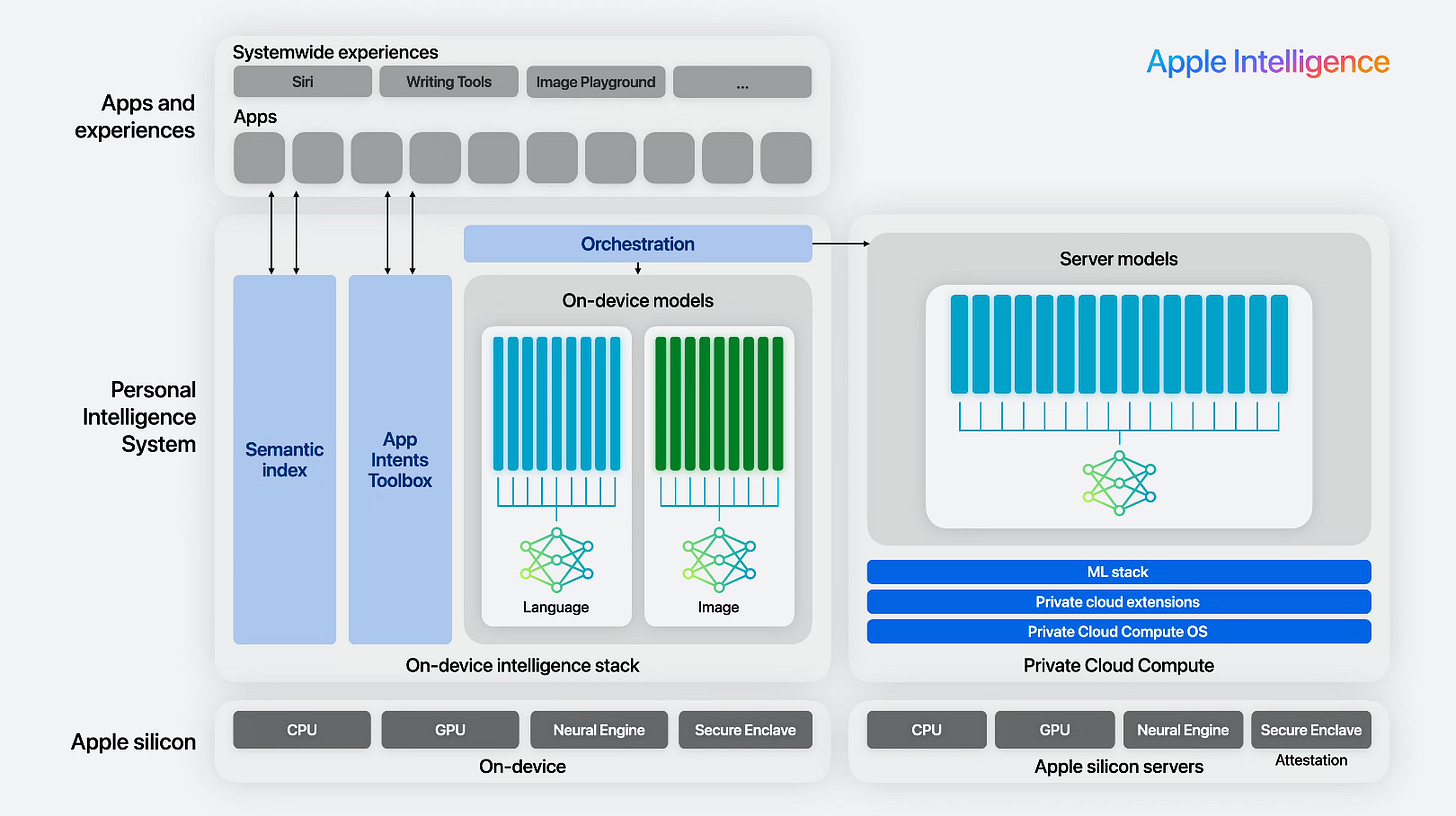

Putting Both Halves Together: The Full Infrastructure of On-Device, Private Cloud Compute and Apple Intelligence Orchestration

Now that we understand the on-device infrastructure, as well as the cloud compute infrastructure, how do they all work together to bring us Apple Intelligence?

1. When a request comes in, Apple Intelligence orchestrates it and decides which model to use: the on-device model or the cloud models running in Private Cloud Compute.

2. Then it draws on your personal intelligence system, which is stored on device (not in the cloud), to help carry out the request.

3. Your personal intelligence system includes an On-Device Semantic Index (organizing personal information across your apps to provide personal context) and an App Intents Toolbox (which helps Apple Intelligence understand what your applications can do and the parameters they take).
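The three steps above can be sketched as a tiny routing function. Everything here is hypothetical: Apple has not published how the orchestration decides between models, so the word-count rule, the class and function names, and the sample data are stand-ins to show the shape of the flow only.

```python
from dataclasses import dataclass, field


@dataclass
class PersonalContext:
    """Stand-in for the on-device personal intelligence system."""
    semantic_index: dict = field(default_factory=dict)  # keyword -> personal fact
    app_intents: dict = field(default_factory=dict)     # app name -> actions

    def relevant_facts(self, request):
        # Toy lookup: return facts whose keyword appears in the request.
        words = request.lower().split()
        return [fact for key, fact in self.semantic_index.items() if key in words]


def choose_model(request, on_device_word_limit=12):
    # Hypothetical rule: short requests stay on device, long ones go to the cloud.
    if len(request.split()) <= on_device_word_limit:
        return "on_device"
    return "private_cloud_compute"


def orchestrate(request, context):
    """Pick a model and gather on-device context for the request."""
    return {
        "model": choose_model(request),
        "facts": context.relevant_facts(request),
        "available_actions": context.app_intents,
    }


context = PersonalContext(
    semantic_index={"flight": "Mom's flight lands at 5 pm"},  # made-up data
    app_intents={"Messages": ["send_message"]},
)
short_plan = orchestrate("When does the flight land", context)
long_plan = orchestrate(
    "Write a detailed two page summary of every email thread I had "
    "with my team this quarter",
    context,
)
```

In this sketch the personal context always comes from the on-device store, no matter which model ends up handling the request.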

Other Apple Intelligence Announcements

At the end, they also announced a partnership with OpenAI, the details of which are still a little unclear. It is strictly opt-in, and for those worried about data privacy, Tim Cook confirmed in an interview that it most likely will not adhere to the strict privacy policy used by Apple Intelligence.

Apple also announced significant enhancements to Siri at WWDC, and you should watch the full video to really see them in action. If you've been with me since last month, though, you won't be surprised: we covered a research paper on their ReALM model, which gave Siri a huge upgrade.

My Initial Thoughts

Everyone here knows how crazy I am about data privacy, so brace yourselves. What I'm about to say is going to shock you.

In my opinion, this is by far the most advanced AI architecture for data privacy protection that exists for the everyday user. I’m honestly so excited about it, and I'm considering upgrading my phone so I can use it. I am not scared to use Apple devices, and while I (of course) have some hesitancy, I also take comfort in the fact that it’s the best infrastructure available to me to date.

I brought in a colleague of mine to give his own opinion on the matter, so tune in to the video if you want to hear our dialogue (including the question of who’s going to pay for this increase in cloud costs).

I'm really excited about this step for data privacy. Apple Intelligence may look to many like a boring infrastructure update that no one really cares about, but in reality, Apple took the first major step to protect us.

If you know me, that is an endorsement I do not give lightly.